There is often a noticeable disconnect between the media buyers analyzing ad metrics and creative teams designing the assets. Many teams find themselves stuck in a classic A/B testing loop, testing variables like button colors or headlines to see what won, but struggling to understand why it actually worked. Without understanding the mechanics behind a winning ad, ideation eventually runs out of steam, and budgets get wasted on endless tests that do not teach us anything.

I recently sat down with Vadim Stolberg, Founder of growth marketing agency dcbl, to discuss how teams can bridge this gap by graduating to true creative testing. Vadim argues that a data-driven approach should not act as a censor that shuts down unconventional ideas. Instead, data should fuel exploration and guide the creative process.

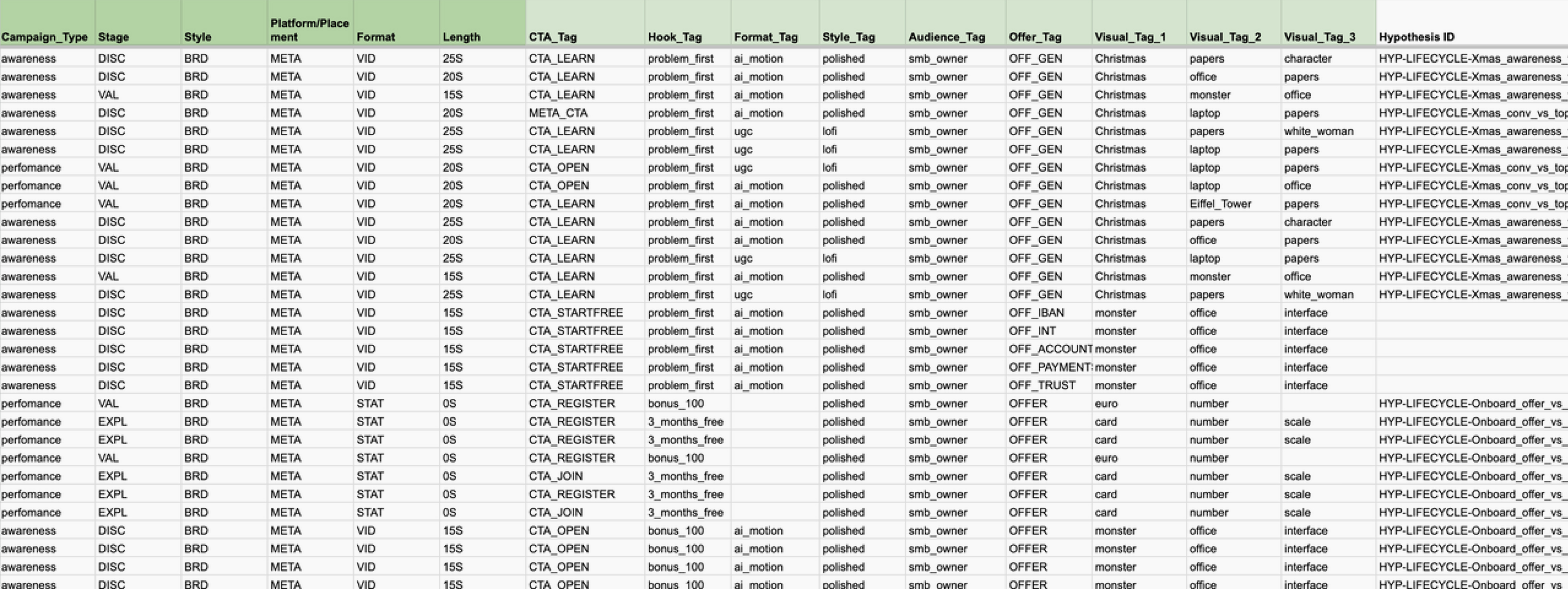

In this conversation, we explore how to transition to a hypothesis-driven creative cycle. We discuss the core variables you should be testing, like personas, hooks, and angles, to generate reusable insights. We also break down how building a “Creative Registry” helps standardize your data, ultimately preparing your infrastructure so your team can benefit from AI-aided workflows.

Key takeaways:

- Graduate from A/B testing to creative testing. Classic A/B testing only tells you what works, which is no longer enough. To build a scalable system, you need to test the mechanics behind the winners, such as personas, hooks, angles, and proof points, to understand why they succeed.

- Use data to expand, not censor. Data should not box creativity in; it should fuel exploration. Apply a portfolio approach to your budget: spend 70% to “exploit” variations of proven winners, and dedicate 30% to “explore” fresh hypotheses, angles, and unconventional ideas.

- Build a Creative Registry. A Creative Registry acts as your team's operational memory, turning your creatives into a standardized dataset that AI can eventually use to spot patterns and predict performance.

Part 1: the methodology (testing & data)

Scott: How do you ensure that a data-driven approach informs the creative process rather than stifling the “weird” or intuitive ideas that often produce the best hooks?

Vadim: There’s actually much less conflict between intuition and data than people think. At its core, intuition is just compressed data from past experience. The real issue with human intuition in performance marketing is that it’s subjective and not very consistent. To keep finding winning concepts, creative marketers need to constantly unlock new personas and unmet needs, and that’s exactly where a data-driven approach can meaningfully expand your field of view.

That said, in practice the balance is often off. Teams end up using data as a veto, instead of as a driver for discovering effective creative ideas. In a true experimentation culture, the assumption is the opposite: we don’t know the value of an idea until we test it. So the goal is to test systematically, ship fast, and kill fast, so you can move forward safely.

Practically, this means three things. First, data should fuel exploration, not act as a “censor” that shuts down unconventional ideas. Second, you need to move from “A/B testing to pick a winner” to creative testing to understand why. Instead of testing just buttons or colors, focus on meaningful variables like hypotheses, hooks, angles, proof points, personas, and formats. Third, set up your test so there’s a dedicated space for “pure” creativity with looser rules (usually 1-2 slots per ad set). That way you’re constantly injecting something fresh and unexpected into the system so it doesn’t just spiral into endlessly remixing itself.

Otherwise, there’s no real learning, and the creative process eventually collapses because guesswork ideation runs out of steam.

The portfolio rule helps here, splitting your budget across two types of work:

- Exploit (≈70%). Variations of what’s already proven and understood. Here, historical data leads, and creative is used to extend and scale success.

- Explore (≈30%). Testing new hypotheses, angles, hooks, visuals, and formats. Here, creativity leads, supported by R&D from both external signals and internal insights.

In the end, data shouldn’t box creativity in. It should guide it, enrich it with hypotheses, and help refine it over time. And with the right balance, you avoid wasting budget on endless tests that don’t actually teach you anything.
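As an editorial illustration (not something Vadim shared), the portfolio rule above can be sketched as simple arithmetic. The function name `split_budget`, the default 30% explore share, and the example budget of 10,000 are assumptions chosen for the example; the interview gives the split only as approximate.

```python
def split_budget(total: float, explore_share: float = 0.30) -> dict:
    """Split a creative testing budget into exploit and explore buckets.

    explore_share is the fraction reserved for new hypotheses; the
    remainder goes to variations of proven winners. The 0.30 default
    mirrors the approximate 70/30 rule and is not a hard requirement.
    """
    if not 0.0 <= explore_share <= 1.0:
        raise ValueError("explore_share must be between 0 and 1")
    explore = round(total * explore_share, 2)
    exploit = round(total - explore, 2)
    return {"exploit": exploit, "explore": explore}


# Hypothetical monthly budget of 10,000 (any currency).
budget = split_budget(10_000)
print(budget)
```

In practice the share would likely shift over time, for example leaning further toward explore when the existing winners start to fatigue.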

Scott: You distinguish between standard A/B testing and “creative testing.” For a team that is stuck in the mindset of testing button colors or headlines (standard A/B), what needs to happen for those teams to graduate to true creative testing?

Vadim: First, the mindset has to change. The usual path to that shift is pretty straightforward: the old approach (where the main unit of testing is the creative asset itself) stops working, and there’s no proper hypothesis-driven system in place yet. That’s when the shift starts, with curiosity and with the questions teams begin asking themselves:

- Why does one creative work while another doesn’t?

- What should I do to replicate success?

- How can I get more winners (a higher win rate) without hurting profitability?

A strong creative testing strategy should be built to answer exactly these questions. Classic A/B testing only tells you what works and what doesn’t, which is useful, but no longer enough. What really matters now is learning, because that’s what fuels the entire creative cycle.

Scott: A common struggle is seeing what won but not knowing why. When designing tests, what specific questions should we be asking to ensure we get a learning outcome that informs the next batch of creative, rather than just a simple winner/loser result?

Vadim: To generate insights you can actually reuse, your tests need to be framed around questions that help you uncover the mechanics behind winners. A few core ones to focus on:

- Persona. Which audience barriers and drivers best match your product’s features and benefits?

- Hook. What actually stopped the scroll: a pseudo-interactive pattern, unexpected honesty, a clear value promise, a promo, a standout visual, or something else?

- Angle. What “reason to believe or buy” worked - saving money, status, safety, belonging, simplicity, speed?

- Proof. What removed doubt - product demo, testimonial, hard numbers, competitor comparison, before/after, UGC-style recommendation?

- Funnel. Where did the win actually happen? Top of funnel (attention/click), bottom (post-click conversion), or across the whole journey?

To answer these properly, you need to break creatives down into components, which is where a solid tagging system and taxonomy come in.

Scott: Can you walk us through what a hypothesis-driven creative cycle actually looks like in practice? Do you write these down? How formal does it need to be?

Vadim: If you’re moving to a hypothesis-driven creative cycle, a few things need to exist:

- Hypothesis Log. A single place where you track what you’re testing and why. Every creative should be tied to a hypothesis from the start, at least until that hypothesis is validated.

- Test Design. How you structure the test, e.g. asset mix, attribution window, success metrics, stop rules, and what a “good result” actually looks like.

- Learning Record. What you learned (in the form of rules or patterns), and which signals get fed into the next R&D cycle.
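To make the three artifacts above more concrete, here is a minimal sketch of how they might be stored together. This is an illustration only: the class names (`ExperimentDesign`, `Hypothesis`), every field name, and the example values are assumptions, not the format Vadim's team uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ExperimentDesign:
    """Test Design: how the test is structured (all fields hypothetical)."""
    asset_mix: str
    attribution_window_days: int
    success_metric: str
    stop_rule: str
    good_result: str


@dataclass
class Hypothesis:
    """One Hypothesis Log entry, with its Learning Record once closed."""
    hypothesis_id: str
    statement: str
    design: ExperimentDesign
    creative_ids: List[str] = field(default_factory=list)
    status: str = "open"  # "open", "validated", or "invalidated"
    learning: Optional[str] = None

    def close(self, validated: bool, learning: str) -> None:
        """Record the outcome and the reusable rule or pattern learned."""
        self.status = "validated" if validated else "invalidated"
        self.learning = learning


# Hypothetical example entry.
h = Hypothesis(
    hypothesis_id="H-001",
    statement="Price-sensitive users respond best to a saving-money angle",
    design=ExperimentDesign(
        asset_mix="3 videos, 2 statics",
        attribution_window_days=7,
        success_metric="CPA",
        stop_rule="stop after a fixed spend cap with no conversions",
        good_result="CPA at or below the account average",
    ),
    creative_ids=["ad_01", "ad_02"],
)
h.close(validated=True, learning="Saving-money angle beats status for this persona")
print(h.status, "-", h.learning)
```

A spreadsheet with the same columns would serve the same purpose; the point is that each creative ID maps back to exactly one hypothesis and one recorded learning.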

Scott: Beyond the obvious ROAS or CPA, are there specific “secondary” metrics that you prioritize when diagnosing creative fatigue versus audience fatigue?

Vadim: You can say that ROAS and CPA are generally too “slow” to manage creative effectively. But there are nuances. For example, with some subscription apps, conversion to purchase happens quickly and cheaply. In those cases, CPA can already be your primary metric at the very first stage of testing.

In many other cases, though, pushing tests to 50 conversions (as Meta recommends) becomes expensive. That’s where it makes sense to review different metrics depending on what you’re testing:

- Testing new hypotheses. Focus on CTR and hook rate. CPA still matters, but it plays a slightly smaller role at this stage.

- Testing variations. CPA becomes more important, and you also bring in secondary signals like tags that help you evaluate the specific elements you’re testing.

As for diagnosing the type of fatigue (creative vs. audience), here are the key signals:

Creative fatigue signals:

- Early attention metrics drop (CTR, and for video, hook rate), while everything else stays the same.

- CPA increases alongside rising frequency for a specific creative.

- Performance improves when you introduce new creatives of similar quality to the same audience.

Audience saturation signals:

- CTR stays stable, but CVR drops and CPM increases.

- Creative refreshes have little to no impact.

- You need to expand or rebuild audiences and/or rethink the offer.
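The signals above can be turned into a rough rule-of-thumb check. The sketch below is an editorial illustration: the `MetricChange` structure, the 10% threshold for what counts as a meaningful change, and the label strings are all assumptions, and a real diagnosis would need more context than five numbers.

```python
from dataclasses import dataclass


@dataclass
class MetricChange:
    """Relative change vs. a baseline period, e.g. -0.20 means down 20%."""
    ctr: float
    cvr: float
    cpm: float
    cpa: float
    frequency: float


def diagnose_fatigue(change: MetricChange, threshold: float = 0.10) -> str:
    """Classify a performance drop using the signals listed above.

    The threshold (assumed 10%) separates a real move from noise.
    """
    def dropped(x: float) -> bool:
        return x <= -threshold

    def rose(x: float) -> bool:
        return x >= threshold

    def stable(x: float) -> bool:
        return abs(x) < threshold

    # Audience saturation: CTR holds, but CVR falls and CPM climbs.
    if stable(change.ctr) and dropped(change.cvr) and rose(change.cpm):
        return "audience_saturation"
    # Creative fatigue: early attention drops while the rest holds.
    if dropped(change.ctr) and stable(change.cvr) and stable(change.cpm):
        return "creative_fatigue"
    # Creative fatigue: CPA rises together with frequency.
    if rose(change.cpa) and rose(change.frequency):
        return "creative_fatigue"
    return "inconclusive"


# Hypothetical week-over-week changes for one creative.
print(diagnose_fatigue(MetricChange(ctr=-0.25, cvr=0.0, cpm=0.02, cpa=0.05, frequency=0.05)))
```

The remaining signals in the lists (whether new creatives of similar quality restore performance, or whether refreshes have no effect) require running an actual refresh, so they are not modeled here.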

Part 2: the infrastructure (structure & automation)

Scott: You encourage a “Creative Registry” as a tool for structure. Is this essentially a way to tag and track attributes (like “UGC,” “Humor,” “Educational”)? How does building this registry help automate the decision-making process later on?

Vadim: A Creative Registry is basically your operational memory: a system that captures what worked, why it worked, and how it worked, in a format you can actually use (and automate later).

It solves a few key things:

- Managing the creative cycle. For every creative, you don’t just see performance (assuming it’s connected to your ad accounts), but also all its internal components, and you can analyze them.

- Hypothesis building and validation.

- A constant source of new signals for ideation.

- Data standardization.

On its own, a registry doesn’t do much. But once you start using it to analyze relationships between tags, conversions, and both valid and invalid hypotheses, it becomes a central hub of context and knowledge about your creative system.

At that stage, we bring in AI agents (both our own and external ones) connected to the registry. This setup helps you speed up, scale, and reduce the cost of not just creative analysis, but also the ideation that comes after.
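As a rough illustration of what analyzing relationships between tags and outcomes could look like, the sketch below computes a win rate per tag value from a tiny in-memory registry. The record layout, the tag names and values, and the `winner` flag are all assumptions made for the example; the interview does not describe the registry's actual schema.

```python
from collections import defaultdict

# Hypothetical registry rows: one per creative, with component tags
# and a flag for whether the creative was judged a winner.
registry = [
    {"creative_id": "ad_01", "tags": {"angle": "saving_money", "format": "ugc"}, "winner": True},
    {"creative_id": "ad_02", "tags": {"angle": "saving_money", "format": "lo_fi"}, "winner": True},
    {"creative_id": "ad_03", "tags": {"angle": "status", "format": "ugc"}, "winner": False},
    {"creative_id": "ad_04", "tags": {"angle": "saving_money", "format": "ugc"}, "winner": False},
]


def win_rate_by_tag(records, dimension):
    """Return the share of winners for each value of one tag dimension."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for record in records:
        value = record["tags"].get(dimension)
        if value is None:
            continue  # creative was never tagged on this dimension
        totals[value] += 1
        wins[value] += int(record["winner"])
    return {value: wins[value] / totals[value] for value in totals}


for angle, rate in win_rate_by_tag(registry, "angle").items():
    print(f"{angle}: {rate:.0%} win rate")
```

With only a handful of creatives per tag, rates like these are anecdotes rather than evidence; the value of a registry comes from accumulating enough consistently tagged records for such comparisons to mean something.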

Scott: In the creative cycle, which parts should never be automated, and which parts are you happy to hand over to the machines?

Vadim: Right now, I wouldn’t fully hand over these parts to automation:

- Hypothesis creation and prioritization. They’re deeply tied to product context and overall strategy, so human judgment still matters a lot here.

- Pure ideation. Humans can still compete with machines creatively. As I mentioned earlier, automation should expand ideation, not replace it.

- Production. In some cases, even for performance creatives, the tools for control just aren’t there yet, or AI production ends up being more expensive than traditional methods. And when it comes to authenticity, the value (and price) of creator-driven content might actually increase as AI becomes more widespread.

- Managing the cycle itself. If you don’t understand how your creative cycle works internally and rely entirely on automation, it’ll be hard to adapt to new challenges going forward.

That said, never say never. Technically, you can already automate almost the entire cycle. The real question is how satisfied you’ll be with the results right now.

Scott: I believe teams need to be “AI Ready” (good data hygiene) before they can be “AI First.” How does a structured Creative Registry help a team become “AI Ready?”

Vadim: To consistently produce creatives that actually perform, you first need to get your infrastructure in order, and that starts with structuring data across all your sources. A Creative Registry, as the central hub for collecting and analyzing creative data, is exactly about that.

It does three key things:

- Standardizes your data.

- Creates a feedback loop.

- Turns creative into a dataset.

At that point, AI can actually start to work properly in:

- Spotting patterns.

- Predicting performance.

- Generating informed creative.

And most importantly, this becomes your unique creative system, built on your specific context and enriched with both internal and external data and approaches.

That’s when you start seeing real gains like lower costs and higher win rates.

And to really go “AI First”, there’s just a little more to do. Run the same playbook across automation, ops, finance, etc. and stitch it all together into one connected layer.

So yeah… plenty of work still ahead of us!

Part 3: the reality check (challenges & blockers)

Scott: One of the biggest blockers most of us see is the disconnect between “data people” (media buyers) and “design people” (creatives). How do you use data to bridge that gap? How do you present performance data to a designer without killing their morale?

Vadim: I think the foundation of good collaboration here is a shared language and a synchronized process across the team:

- Shift from judgments to mechanisms. There’s a big difference between saying “CTR is low, we need new ideas” and saying “Here’s the attention curve across hooks, here’s where people drop off, and here are the angles we haven’t explored yet - let’s come up with a different entry point together.”

- Treat everything as one connected cycle. R&D, ideation, test design, analysis, measurement, signals - these aren’t separate silos, they’re all parts of the same loop. They’re so interconnected that anyone responsible for results simply can’t afford to be stuck in their own subjective taste.

When you create shared touchpoints across the entire cycle, and give people visibility into and influence over each other’s decisions, that’s what really drives strong collaboration.

Scott: What is a common “bad process” you see creative teams trying to automate that they should fix manually first?

Vadim: The most common bad process is when a team hasn’t yet shifted its mindset or infrastructure to a hypothesis-based approach, but has already started automating production.

That’s when all the magic of data and analysis disappears. You end up generating tons of assets with no tagging system and no learning record. And instead of scaling performance, you’re just scaling entropy.

Scott: With the rise of ad partnerships, brands are relinquishing control for the sake of authenticity. What is the main challenge you see brands facing when they try to integrate this “lo-fi” content into a highly structured, data-driven testing roadmap?

Vadim: Authenticity is a key attribute of creator content on platforms. It can (and should) feed directly into your hypotheses.

If you’re using a full-funnel creative strategy, the top of the funnel is usually more hi-fi, branded content. As you move down the funnel, the value of authenticity increases. To avoid losing control completely, you can add some structure through:

- Brand guardrails. A set of non-negotiables (brand codes, do’s & don’ts, legal constraints).

- A GET (Good Enough to Test) checklist. A kind of “red line” of minimum requirements a piece of content needs to meet before going into testing.

- Extra tags. Add tags like “lo_fi” and “partnership_ads” to your registry, and then track not just how this type of content performs vs. others, but also how a more native approach impacts both brand and overall performance across different platforms.

The key is that these shouldn’t restrict the creator, just guide them.

Scott: What’s the bigger challenge you see, scaling ad ideation or production?

Vadim: The main bottleneck today isn’t production, it’s ideation. With modular approaches and a well-set-up AI stack, you can generate dozens of variations within a single hypothesis pretty easily.

What really drives creative quality (i.e., performance) is the number of hypotheses you’re able to test. The more hypotheses you validate, the more future winners you unlock.

Scott: When a team decides to shift from a chaotic creative process to a structured, hypothesis-driven one, where is the most friction usually found?

Vadim: The biggest friction usually comes from this perceived trade-off between “beauty” and “performance.” In reality, a data-driven approach, once a team starts actually using it, helps direct creativity toward business growth.

When that clicks, you get a real shift in identity from “I make beautiful things” to “I build hypothesis systems.”

Here’s how I’d map where the biggest friction showed up on our path to transformation:

- Fear of losing control. It often feels like at some point we’ll have to hand everything over, including creative decisions, to the machine. And that fear makes people push back on automation altogether. What to actually do about it is probably the hardest part. Personally, I believe that as long as there are humans on “that” side, they’ll always care about the human on “this” side. So the role of automation isn’t to replace creativity, it’s to expand what people can come up with.

- KPI conflicts. Friction shows up when people inside the company move at different speeds and look at performance through completely different lenses. The fix is to align on what success actually looks like and where you’re heading, so everyone can move at their own pace but still stay on the same journey.

- No clear owner of the creative cycle (and no one driving discipline). It’s incredibly easy to fall back into old habits in the day-to-day. You need someone in the team who owns the creative cycle end-to-end, and someone who keeps everyone on track and reminds them what process they’re actually in. That second role, by the way, could easily be another AI agent.

Vadim’s recommended resources

To close our interview, here are a few additional resources and notes from Vadim on what’s keeping him inspired!

- [Book] “Click Here: The Art and Science of Digital Marketing and Advertising” by Alex Schultz (Meta’s CMO)

- [Resource center] Research @ Anthropic. They publish pretty much daily, a great source of ideas (a lot coming from Dario Amodei)

- [YouTube channel] Andrej Karpathy’s channel, especially the Neural Networks: Zero to Hero playlist