Launch structured creative tests and track what's working in one place with Bïrch Launcher and Explorer.

If you’ve tried to research how many creatives to test, you’ve probably seen the same answer come up repeatedly. “It depends.” And, of course, it does. But on what? And how do you use your data to inform your ad creative testing?

In most cases, you should be testing 2–3 creatives at a time on a small budget, 3–5 once you have a defined audience, and 6–10 or more per week as you scale. The right number depends on what your budget, goals, and account can support.

In this article, we’ll break down what drives that number and give you a framework you can apply to your own account.

Key takeaways

- Test volume should match your stage: 2–3 creatives on a small budget, 3–5 with a defined audience, and 6–10+ per week once you’re scaling.

- Each creative needs enough conversions to be judged, so testing too many at once with limited budget leads to weak, unreliable data.

- Budget is the main constraint: aim to spend about 2–3x your target CPA per creative before deciding if it works.

- Keep new creatives ready as performance changes, especially in higher-spend accounts where frequent refreshes support steady results.

- Bïrch can turn testing into a repeatable system, so you can launch more creatives and keep learning as your account scales.

Why the number matters more than most teams think

Testing works when you have enough variation in your creatives to compare and enough data to see a pattern. In practice, that comes down to how many conversions each creative actually gets.

If you test too few creatives, you might find something that works, but you won’t have enough context to understand what made the difference. On the other hand, every ad set is working with a limited number of conversions that get split across all the creatives you’re running. So, if you’re generating 10 conversions and testing 5 creatives, each one is only getting around 2 outcomes. That’s not enough data to understand performance, which is why results can feel inconsistent or hard to trust.
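The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration that assumes conversions split evenly across creatives, which real delivery rarely does; the function name is hypothetical.

```python
def conversions_per_creative(total_conversions: int, num_creatives: int) -> float:
    """Average conversions each creative receives, assuming an even split."""
    if num_creatives <= 0:
        raise ValueError("num_creatives must be positive")
    return total_conversions / num_creatives


# The example from the text: 10 conversions shared by 5 creatives.
print(conversions_per_creative(10, 5))  # 2.0
```

Because delivery usually favors some ads over others, the even split is a best case for the weakest creative, so the actual count for some creatives may be lower still.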

The variables that determine how many creatives to test

To decide how many creatives to test, focus on these factors:

Budget

Your budget determines how much spend each creative can realistically get.

A simple way to estimate spend per creative is to look at your CPA. In most cases, you need to spend around two to three times your target CPA per creative before making a call.

If your budget can’t support that across all creatives, test fewer and give each one enough spend to produce a clear signal.
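As a rough sketch, the rule of thumb above can be turned into a quick calculation. The function name, the default multiple, and the example figures ($600 test budget, $50 target CPA) are illustrative assumptions, not recommendations.

```python
import math


def max_creatives(test_budget: float, target_cpa: float, cpa_multiple: float = 3.0) -> int:
    """Number of creatives the budget can fund at cpa_multiple x target CPA each."""
    if target_cpa <= 0 or cpa_multiple <= 0:
        raise ValueError("target_cpa and cpa_multiple must be positive")
    spend_per_creative = target_cpa * cpa_multiple
    return math.floor(test_budget / spend_per_creative)


# Hypothetical account: $600 test budget, $50 target CPA.
print(max_creatives(600, 50, cpa_multiple=3))  # 4
print(max_creatives(600, 50, cpa_multiple=2))  # 6
```

If the result is lower than the number of creatives you planned to run, that is the signal to test fewer rather than thin out the spend on each one.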

Account maturity

New accounts test more variables because they don’t yet have enough data to understand how people respond. There’s no clear baseline, so broader testing is needed.

As the account matures and data accumulates, testing focuses on variations of proven concepts so you can refine and scale them.

Campaign objective

What you’re optimizing for changes how much data each creative needs before you can make a decision.

With awareness or engagement campaigns, you get feedback quickly through reach, clicks, or video views, so it’s easier to run more creatives at once.

With conversion-focused campaigns, it takes longer for each creative to generate meaningful results. You need actual purchases or leads before making a call, so each creative needs more spend.

Platform

Platform behavior affects how many creatives can actually get enough data to be evaluated.

- Meta: Delivery quickly concentrates around a few ads, so adding more creatives doesn’t mean they will all be tested.

- TikTok: Faster feedback and quicker fatigue mean you can cycle through more creatives over time, but each still needs enough spend to prove itself.

- Google: Testing happens at the asset level, so the focus is on providing enough variation for the system to build and compare combinations.

Testing goal

Are you trying to find a winner, or understand why something works?

If you’re trying to find a winner, you’ll typically test more creatives at once to increase your chances of finding something that performs. If you’re trying to understand why something works, you’ll test fewer creatives with more controlled variations so you can isolate what’s actually driving performance.

A practical framework: how many creatives to test by situation

Small budget or early-stage account

If you’re working with a smaller test budget, typically in the $300–1,000 range, keep the number of creatives low. In most cases, that means testing 2–3 creatives at a time. Change one variable between them, like the hook or angle, so you can see what’s actually driving performance.

At this level, spreading budget across more variations usually backfires. Each creative needs enough spend to generate real outcomes. If you try to test too many at once, you risk none of them getting enough data, and you could end up making decisions on noise.

Mid-stage account with a defined audience

At this point, you may have enough data to stop testing blindly, but not enough to scale aggressively. So, run 3–5 creatives, each testing a distinct angle or execution.

The goal here is comparison, so the creatives you test need clear differences. The risk is adding too many variations, especially with smaller audiences, because performance will flatten as the budget gets spread too thin.

Keep them in rotation and refresh as soon as you identify fatigue. The next set should be ready before results start slipping.

Scaling account or high-volume program

At scale, typically $50K–100K or more per month, creative testing becomes part of the regular strategy. This is common in high-spend ecommerce, DTC, or fast-moving paid social accounts where performance depends heavily on creative output.

In these cases, it’s common to launch 6–10 new creatives per week, and that can increase to multiple new creatives per day.

At this level, creatives fatigue quickly, often within 2–3 days, so performance depends on how quickly you can replace what stops working. New creatives should already be in rotation before results start to drop.

As volume increases, you need to be more deliberate. Each new creative should be based on a proven concept, and one clear variation should be introduced so you can keep learning while maintaining performance.
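The three situations above can be summarized as a simple lookup. This is only a sketch of the ranges given in this article; the stage keys and names are hypothetical labels chosen for illustration.

```python
from typing import NamedTuple


class VolumeGuide(NamedTuple):
    low: int
    high: int
    cadence: str


# Ranges taken from the framework above; the keys are made-up labels.
GUIDELINES = {
    "small_budget": VolumeGuide(2, 3, "at a time"),
    "mid_stage": VolumeGuide(3, 5, "at a time"),
    "scaling": VolumeGuide(6, 10, "new per week"),
}


def suggested_volume(stage: str) -> VolumeGuide:
    """Return the suggested creative range for a given account stage."""
    if stage not in GUIDELINES:
        raise ValueError(f"unknown stage: {stage!r}")
    return GUIDELINES[stage]


guide = suggested_volume("mid_stage")
print(f"{guide.low}-{guide.high} creatives {guide.cadence}")  # 3-5 creatives at a time
```

Treat the output as a starting point: the scaling range is a floor that can rise with spend, and any range still has to fit what your budget can fund per creative.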

Focus on the creatives you’re testing, not just how many

Beyond how many creatives you’re testing, you want to make sure your tests actually give you something useful. Finding a winner is part of it, but the real value is understanding why it worked. What did your audience respond to? That’s what you build on next.

To get there, the differences between your creatives need to be clear. Change the hook, angle, format, or offer so you have something real to compare. Minor differences just increase how many ads you have to look at.

As spend increases, this becomes more important. Each creative should reflect a clear idea you’re trying to validate, like how you position the product or what problem you lead with.

For a deeper breakdown of how to structure these tests, read our guide to building a creative testing framework.

How to test more without it becoming unmanageable

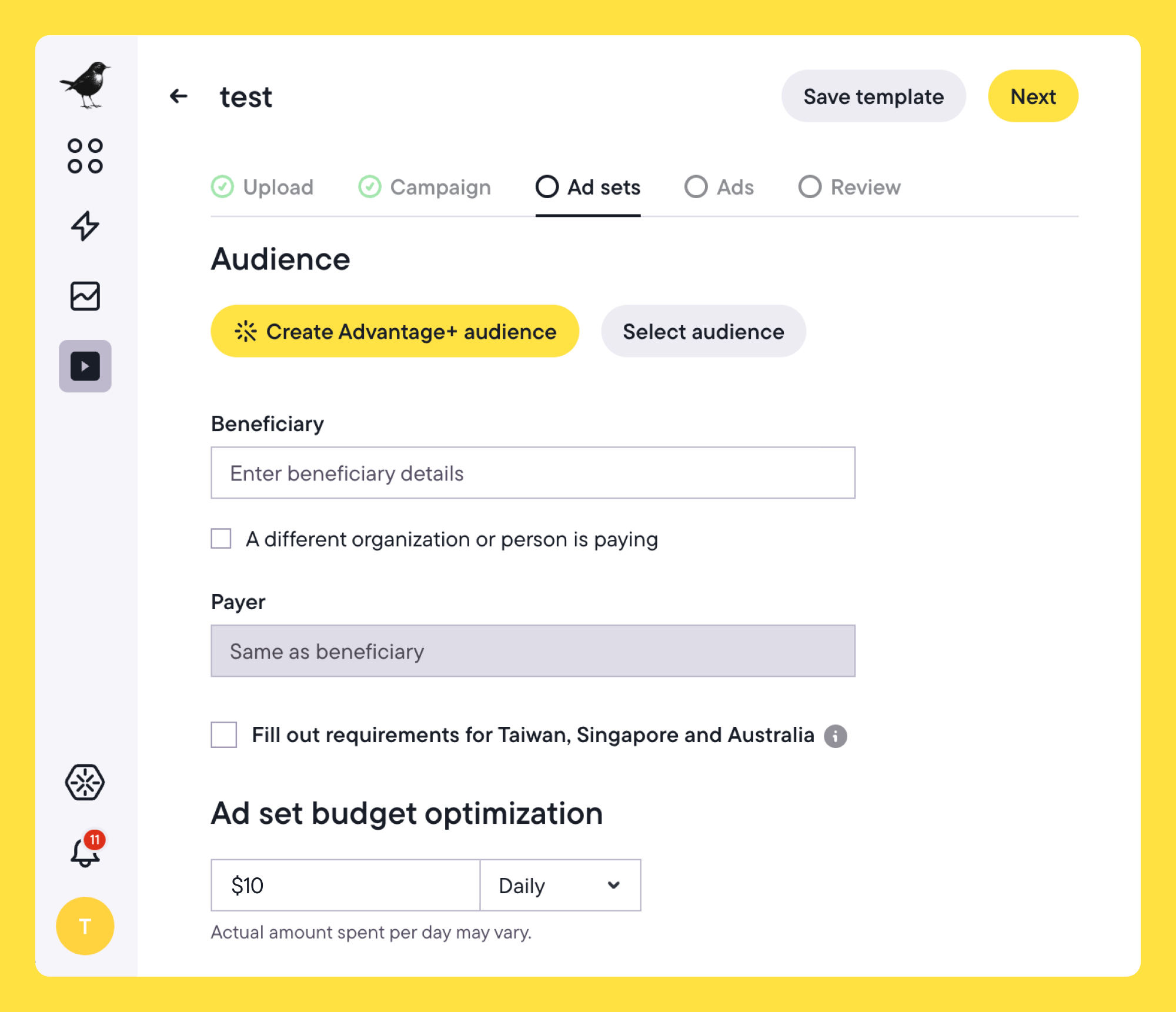

For reliable results, each creative needs to run under the same setup: the same audience, budget, and timing. Doing that manually across multiple variations slows everything down and limits how much you can actually test.

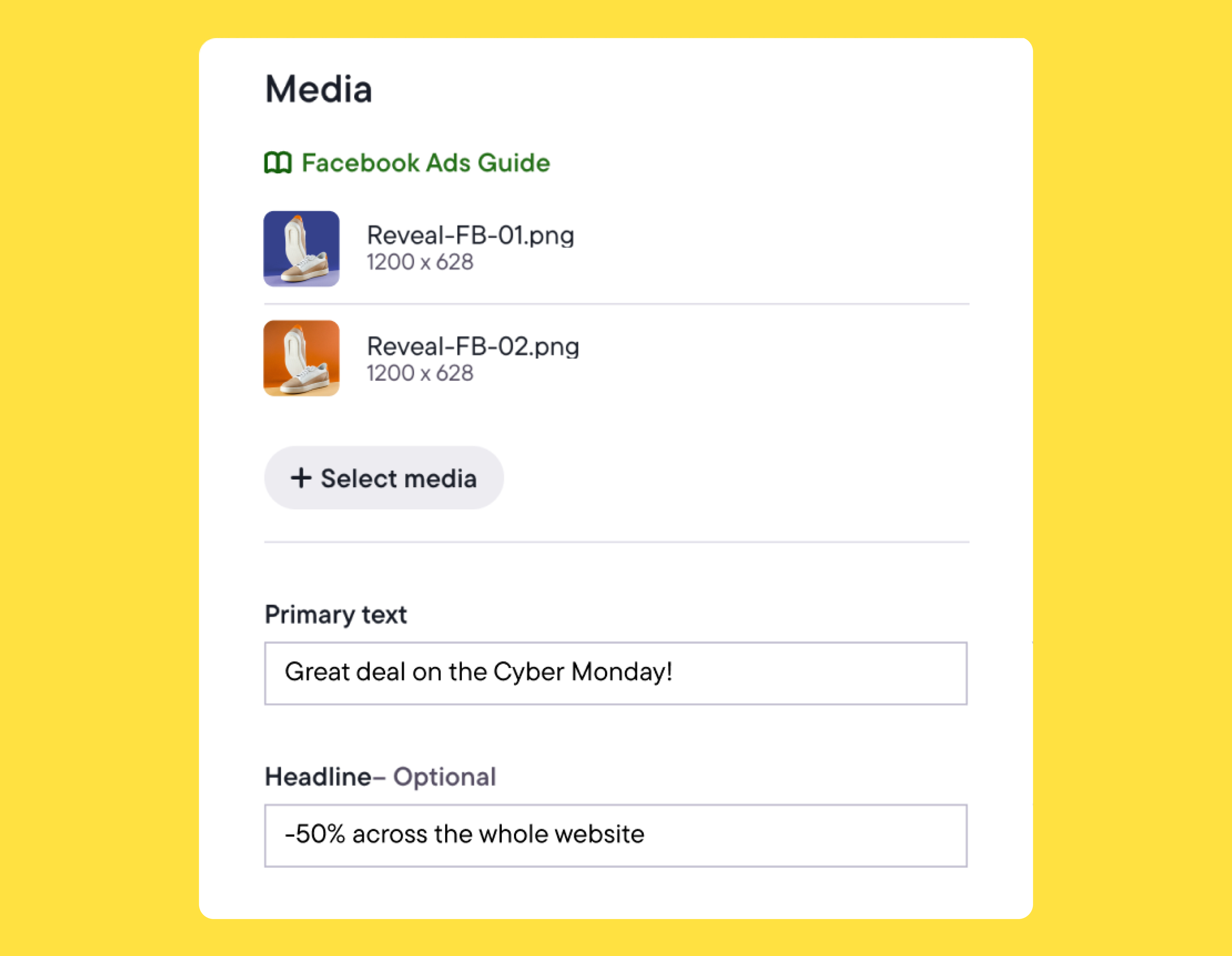

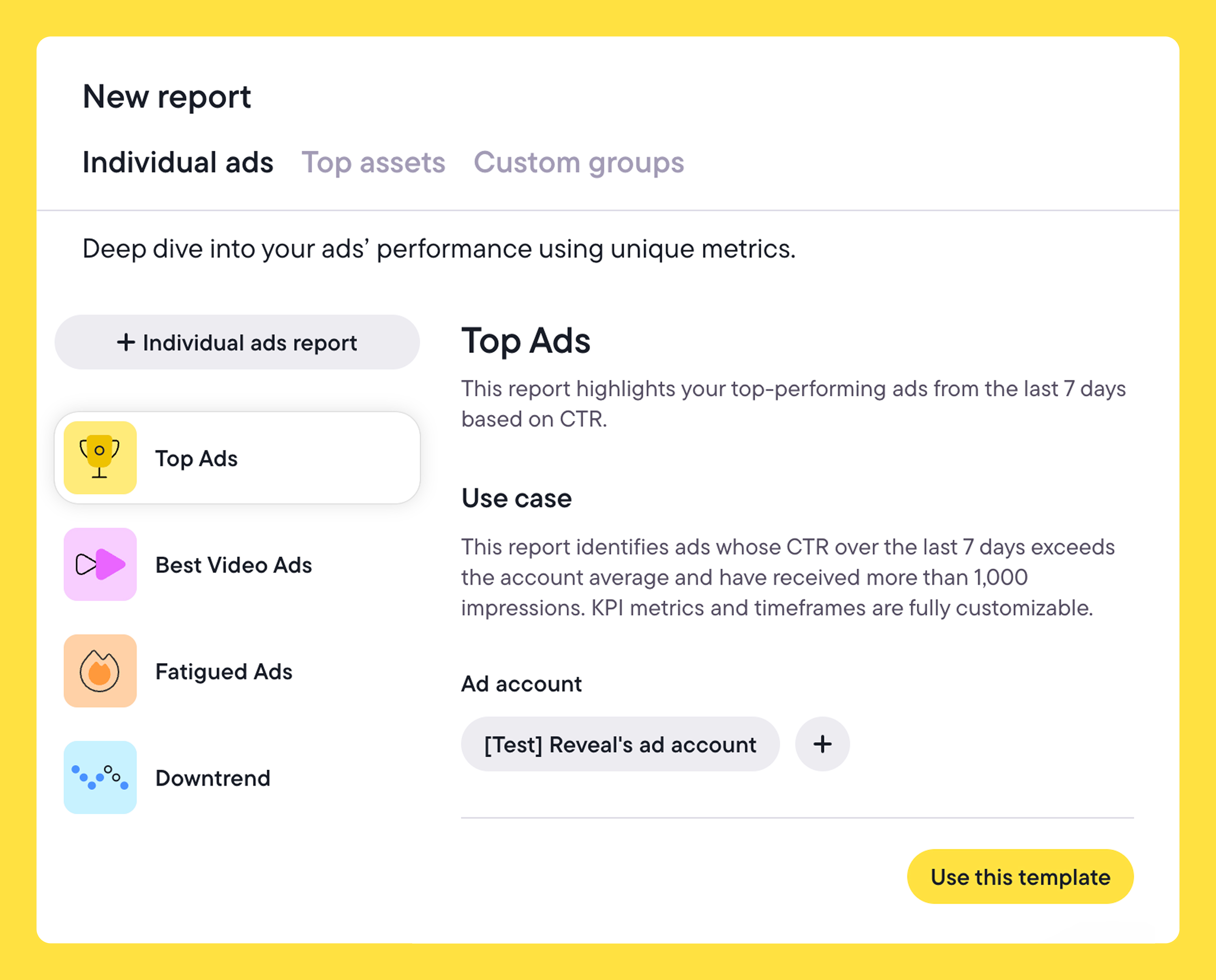

In Bïrch, Launcher lets you upload multiple creatives at once, generate variations from them, and launch everything under the same conditions in a single workflow. Instead of setting up each test individually, you define the structure once and apply it across all creatives.

Making sense of the results

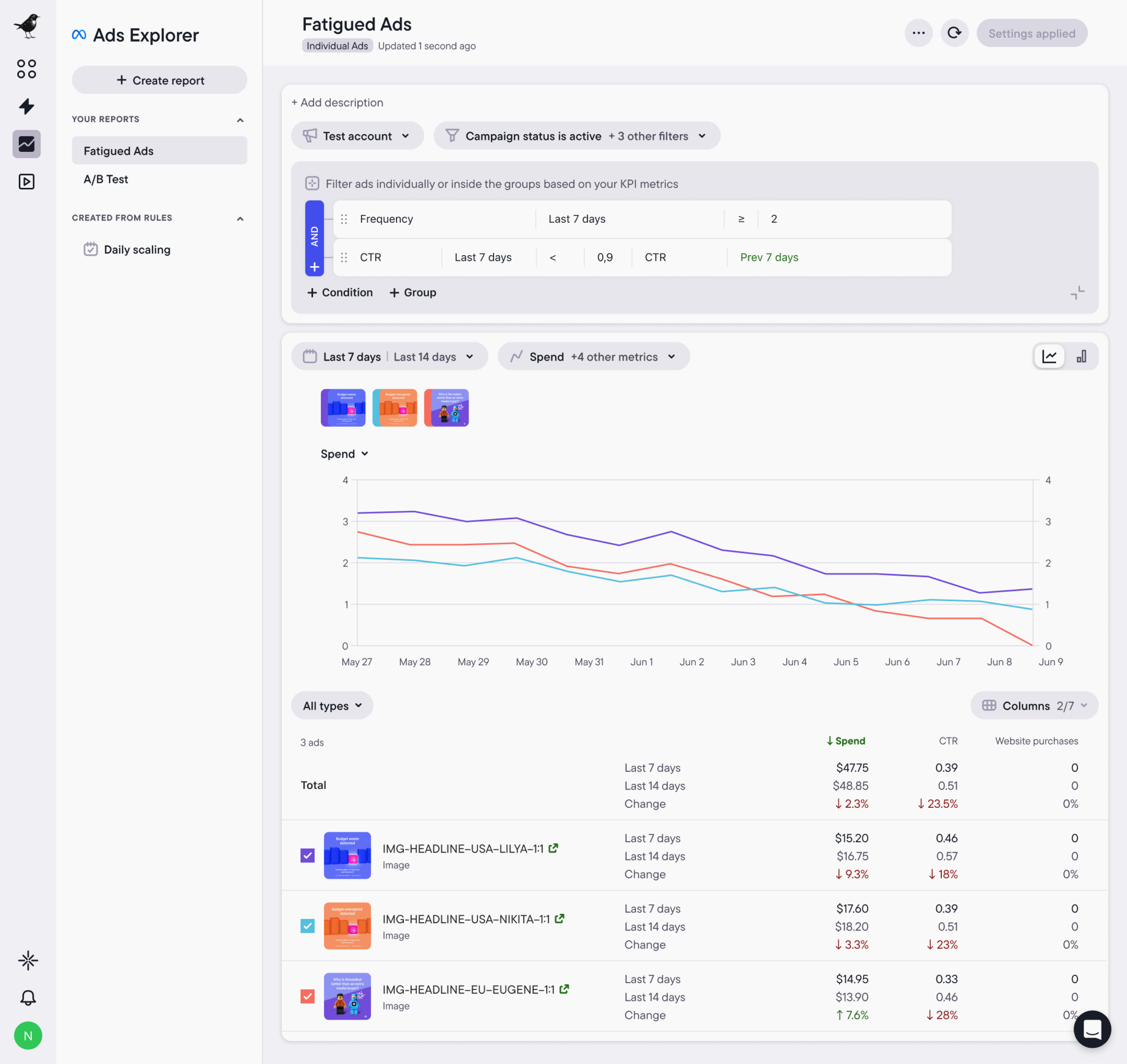

As you test more creatives, you start to notice what’s working. But with a high volume of creatives, those patterns get harder to spot as results spread across campaigns and accounts, making them difficult to act on without a strong, repeatable system.

In Bïrch, Explorer pulls performance into one place so you can see how creatives are doing across campaigns and which ones are starting to drop off. You can group results by your own naming and compare variations without digging through individual ads.

That makes it easier to build on what’s already working and carry it into the next round of testing, especially when Explorer is used alongside Launcher to keep that process consistent.

The number grows as you scale

There isn’t a fixed number of creatives to test, but there is a right number for your account. It comes down to how many you can actually run, track, and learn from.

As your testing becomes more structured and easier to track, the number naturally increases. What feels like too much at one stage becomes manageable at the next, and most teams can test more than they think once the structure is in place.

Bïrch is built to support that process end to end. You can try Launcher and Explorer with a 14-day free trial.